Re: Exceeded License Limit - Error in "litsearch" command

08-06-2018 06:51 PM

Hello SFallon,

Although you have already figured it out, here is a brief explanation of the problem you are seeing (for the benefit of other community users as well):

Access to Infoblox reports and searches stops working if there are 5 or more license violations in a rolling 30-day period. As explained in the Administrator Guide:

- NIOS continues to index data; however, you will not be able to use the Reporting service.

- You can use the reporting search when the number of violations in the previous 30 days is within the limit.

- If there are 5 consecutive violations in 5 consecutive days, then the reporting feature is disabled for the next 25 days.

As you know, a license violation occurs when the reporting appliance exceeds its maximum allowed daily indexing volume. That maximum is determined by the reporting appliance model and the reporting license installed on the appliance. The specifications are listed in the Administrator Guide under "Supported Reporting Appliances and Storage Space" (newer releases) or "Supported Platforms for Reporting" (older releases).

Take precautions:

- Review the data usage from the Member Status widget in the Status Dashboard.

- Both the "Home Dashboard" and the "Dashboards" inside Reporting contain "License Usage Trend Per Member", which shows volume usage on a per-member basis.

- "Reports" contains "Reporting Volume Usage Trend per Category" and "Reporting Volume Usage Trend per Member", which show volume usage per category and per member respectively.

- When a license violation occurs, the GUI displays a banner at the top stating that the "Reporting Server has reached its maximum licensed data consumption volume". The banner also shows the "Total volume violation count" and the "Maximum allowed violation count" (the maximum allowed is always 4).

- NIOS writes these warnings to syslog and can also trigger SNMP traps and email alerts, if configured to do so.

You may:

- Purchase a bigger license to accommodate the incoming data.

- Disable reporting on specific grid members from which you do not wish to index any information.

- Disable specific report categories for which you do not wish to index data.

- If you notice a sudden and significant increase in volume consumption from specific grid member(s), use the other available reports to analyze their DNS/DHCP traffic rate.

ANSWERING YOUR QUESTIONS :

“My question is, how do I find out how much data is going through the reporter so I can then plan to upgrade the license?”

This needs a deep dive into the metrics logs on your reporting server. If you would like to look at them yourself, here are the steps to follow (alternatively, you can get in touch with Infoblox Technical Support, who will do this for you):

1) Download the support bundle from your reporting server: Grid -> Grid Manager -> Members -> Select the reporting server -> Download the support bundle.

2) Extract the tarball into a directory and navigate to opt/Splunk/. Then extract the tarball whose name starts with "diag*" and enter the directory opt/Splunk/diag*/log/.

3) Identify the time period covered by the metrics logs (check the oldest and latest timestamps in metrics.log and metrics.log.5). Let's assume we have data from 04-01-2018 06:26:37.152 +0000 till 04-03-2018 15:15:16.598 +0000. The statistics you will see in step 4 cover this time period.

4) Now run the following greps from the opt/Splunk/diag*/log/ directory (sample outputs included):
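Step 3 can also be done from the shell instead of opening the files by hand. This is only a rough sketch: the function name `metrics_time_range` is made up for illustration, and it assumes every log line starts with a `MM-DD-YYYY HH:MM:SS.mmm +ZZZZ` timestamp, like the sample timestamps in step 3, all in the same UTC offset.

```shell
# Print the oldest and newest timestamps found in the given metrics logs.
# Assumption: lines start with "MM-DD-YYYY HH:MM:SS.mmm +ZZZZ".
# Example: metrics_time_range metrics.log*
metrics_time_range() {
    awk '$1 ~ /^[0-9][0-9]-[0-9][0-9]-[0-9][0-9][0-9][0-9]$/ {
        # Rebuild a sortable key (YYYYMMDD plus time) from MM-DD-YYYY.
        split($1, d, "-")
        key = d[3] d[1] d[2] " " $2
        stamp = $1 " " $2 " " $3
        if (min == "" || key < min) { min = key; first = stamp }
        if (max == "" || key > max) { max = key; last = stamp }
    }
    END {
        if (min != "") { print "oldest: " first; print "newest: " last }
    }' "$@"
}
```

Run it from the opt/Splunk/diag*/log/ directory against all rotated metrics logs at once.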

Per index [Highest usage without duplicate index/sourcetype/host]

grep group=per_index_thruput metrics.lo* |awk '{print $8, $9, $10, $11}' |cut -d',' -f1,4 |awk '$1=$1' FS="kb=" OFS="kb= " |sed 's/"//' |sed 's/"//' |sed 's/,//' |sort -k3 -nr |sort -uk1,1 |sort -k3 -nr |less

series=ib_syslog kb= 86600.455078

series=ib_audit kb= 429.791016

series=_internal kb= 329.020508

series=_audit kb= 95.732422

series=ib_dns kb= 33.195312

series=ib_discovery kb= 15.125000

series=_introspection kb= 12.232422

series=ib_ipam kb= 4.816406

series=ib_system kb= 1.276367

series=ib_dhcp kb= 1.031250

series=ib_dhcp_lease_history kb= 0.825195

series=ib_security kb= 0.285156

series=ib_system_capacity kb= 0.027344

Comments: From the output above, syslog data is the top contributor. I hope the output is self-explanatory.

Per Sourcetype:

grep group=per_sourcetype_thruput metrics.lo* |awk '{print $8, $9, $10, $11}' |cut -d',' -f1,4 |awk '$1=$1' FS="kb=" OFS="kb= " |sed 's/"//' |sed 's/"//' |sed 's/,//' |sort -k3 -nr |sort -uk1,1 |sort -k3 -nr |less

series=ib:syslog kb= 86600.455078

series=ib:audit kb= 429.791016

series=splunkd kb= 289.090820

series=audittrail kb= 95.732422

series=splunkd_ui_access kb= 50.942383

series=splunk_web_service kb= 36.672852

series=ib:dns:query:top_requested_domain_names kb= 21.449219

series=splunkd_access kb= 16.093750

series=ib:reserved2 kb= 15.125000

series=splunk_web_access kb= 12.541016

series=splunk_disk_objects kb= 10.759766

series=ib:dns:reserved kb= 9.173828

series=ib:dns:zone kb= 4.520508

series=ib:ddns kb= 3.703125

series=splunk_python kb= 2.728516

series=ib:dns:stats kb= 2.363281

series=ib:dns:query:top_clients kb= 2.185547

series=scheduler kb= 1.605469

series=splunk_resource_usage kb= 1.519531

series=ib:system kb= 1.276367

series=ib:dns:query:qps kb= 1.132812

series=ib:ipam:network kb= 0.868164

series=ib:dhcp:lease_history kb= 0.825195

series=ib:dhcp:network kb= 0.783203

series=splunkd_conf kb= 0.576172

series=ib:dns:query:cache_hit_rate kb= 0.380859

series=ib:dns:view kb= 0.295898

series=ib:reserved1 kb= 0.285156

series=ib:dhcp:message kb= 0.269531

series=ib:dns:perf kb= 0.152344

series=ib:dhcp:range kb= 0.108398

series=ib:dns:query:by_member kb= 0.068359

series=splunk_version kb= 0.067383

series=ib:system_capacity:objects kb= 0.027344

Comments: A bit more detailed, but still self-explanatory.

Per Host:

grep group=per_host_thruput metrics.lo* |awk '{print $8, $9, $10, $11}' |cut -d',' -f1,4 |awk '$1=$1' FS="kb=" OFS="kb= " |sed 's/"//' |sed 's/"//' |sed 's/,//' |sort -k3 -nr |sort -uk1,1 |sort -k3 -nr |less

series=thor.lab.local kb= 86632.660156

series=loki.lab.local kb= 597.329102

series=baldr.lab.local kb= 315.830078

series=freyr.lab.local kb= 117.020508

series=odin.lab.local kb= 22.100586

Comments: This should help you figure out which grid member is pushing the most data to the reporting server. You can then plan preventive measures accordingly.
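The three commands above differ only in the group name, so they can be wrapped in one helper. This is a minimal sketch rather than an Infoblox-provided tool: the function name `thruput_summary` is made up here, and it assumes each matching line carries `series="..."` and `kb=...` fields, as the commands above do. Unlike those commands, it prints two numbers per series: the highest kb seen in a single sample, and the sum of kb over all samples.

```shell
# Summarize kb per series for one metrics group.
# Output columns: series, peak kb in one sample, total kb over all samples.
# Example: thruput_summary per_index_thruput metrics.log*
thruput_summary() {
    group="$1"; shift
    grep -h "group=${group}" "$@" |
    awk '{
        series = ""; have_kb = 0
        for (i = 1; i <= NF; i++) {
            field = $i
            sub(/,$/, "", field)              # drop the trailing comma
            if (field ~ /^series=/) {
                series = substr(field, 8)
                gsub(/"/, "", series)         # strip the double quotes
            } else if (field ~ /^kb=/) {
                kb = substr(field, 4) + 0
                have_kb = 1
            }
        }
        if (series == "" || !have_kb) next
        total[series] += kb
        if (!(series in peak) || kb > peak[series]) peak[series] = kb
    }
    END {
        for (s in total) printf "%s %.6f %.6f\n", s, peak[s], total[s]
    }' |
    sort -k2,2nr
}
```

Pass `per_index_thruput`, `per_sourcetype_thruput` or `per_host_thruput` as the first argument to get the per-index, per-sourcetype or per-host view.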

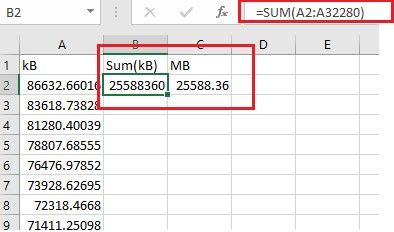

Total indexed data [Based on up to 10 busiest hosts]

grep group=per_host_thruput metrics.lo* |awk '{print $11}' |cut -c4- |cut -d',' -f1 |sort -nr >> count.csv

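Comments: Open count.csv in a spreadsheet and sum up everything in column A using the formula highlighted above. That is the total data indexed (in KB) from 04-01-2018 06:26:37.152 +0000 till 04-03-2018 15:15:16.598 +0000. Note that the command appends (>>) to count.csv, so delete any old count.csv before re-running it.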
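If you would rather skip the spreadsheet, the same total can be computed directly in the shell. Again, this is just a sketch under the same assumptions as the command above (the `kb=` field of every `per_host_thruput` line is summed); the function name `total_indexed_kb` is hypothetical.

```shell
# Sum the kb= field of every per_host_thruput line in the given logs.
# Example: total_indexed_kb metrics.log*
total_indexed_kb() {
    grep -h 'group=per_host_thruput' "$@" |
    awk '{
        for (i = 1; i <= NF; i++)
            if ($i ~ /^kb=/) {
                v = substr($i, 4)
                sub(/,$/, "", v)      # drop the trailing comma
                sum += v
            }
    }
    END { printf "total_kb=%.3f total_mb=%.3f\n", sum, sum / 1024 }'
}
```

Dividing the total by the number of days covered by the logs (step 3) gives a rough daily average to compare against the license limit.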

To identify the total number of violations:

grep 'This pool has exceeded its configured poolsize' splunkd.log*
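The grep above prints every matching line. To see on which days the message was logged, something like the following may help. It is a sketch only: the function name `violation_days` is made up, it assumes splunkd.log lines begin with the date as their first field (as the metrics.log timestamps do), and whether one distinct day equals one counted violation is an assumption you should confirm against the banner in the GUI.

```shell
# List the distinct dates on which the pool-size warning was logged.
# Example: violation_days splunkd.log*
violation_days() {
    grep -h 'This pool has exceeded its configured poolsize' "$@" |
    awk '{ print $1 }' |    # first field is assumed to be the date
    sort -u
}
```

Piping the result through `wc -l` gives the number of distinct days.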

“I have reduced the Report Category to 60 but I don't think that will make any difference as I have gone over the limit now!”

All you have to do is contact the Infoblox Technical Support team and request a reporting reset license (free of cost). They will need the hardware ID of your reporting server in order to generate it. Once your reporting search feature has been unlocked, you can decide whether to go for an enterprise license for your reporting server (recommended in an enterprise network). Your Infoblox systems engineer is the best person to advise you on the limit you should choose for this license.

I hope this addresses your concern.

Best regards,

Mohammed Alman.